When Your AI Tool Becomes the Attacker's Front Door

The Vercel Breach, OAuth Trust Abuse, and Why Awareness Training Is Not a Security Boundary

Date: April 21, 2026

Primary Sources: Vercel Security Bulletin (Vercel) · The Hacker News (THN)

Executive Summary

- What: A compromised third-party AI tool (Context.ai) gave attackers access to a Vercel employee's Google Workspace account via OAuth token abuse — no malware executed on Vercel systems

- Who is affected: Any organization whose employees have granted broad OAuth permissions to third-party AI tools, browser extensions, or SaaS integrations

- Severity: High — attacker accessed internal environments and non-sensitive environment variables; sensitive vars and npm packages were not compromised

- Action required: Audit third-party OAuth grants across your organization, rotate credentials for affected services, and enforce an approved-only execution policy so that identity compromise cannot become unrestricted code execution

Introduction

In April 2026, Vercel disclosed a security incident that followed a path now becoming familiar in the modern threat landscape: an attacker did not break through a firewall or exploit a zero-day vulnerability. Instead, they walked through a door that a trusted third-party AI tool had left open.

The incident originated at Context.ai — a third-party AI productivity tool used by a Vercel employee. A compromised OAuth token from that tool's Google Workspace app gave the attacker access to the employee's Vercel Google Workspace account, and from there, to some Vercel internal environments and non-sensitive environment variables.

No malware executed on Vercel systems. No npm packages were tampered with. The attacker moved through trust relationships, not through code exploits.

That distinction is the central lesson. And it is why human awareness training — while valuable as a support control — cannot carry the primary burden of preventing this class of attack.

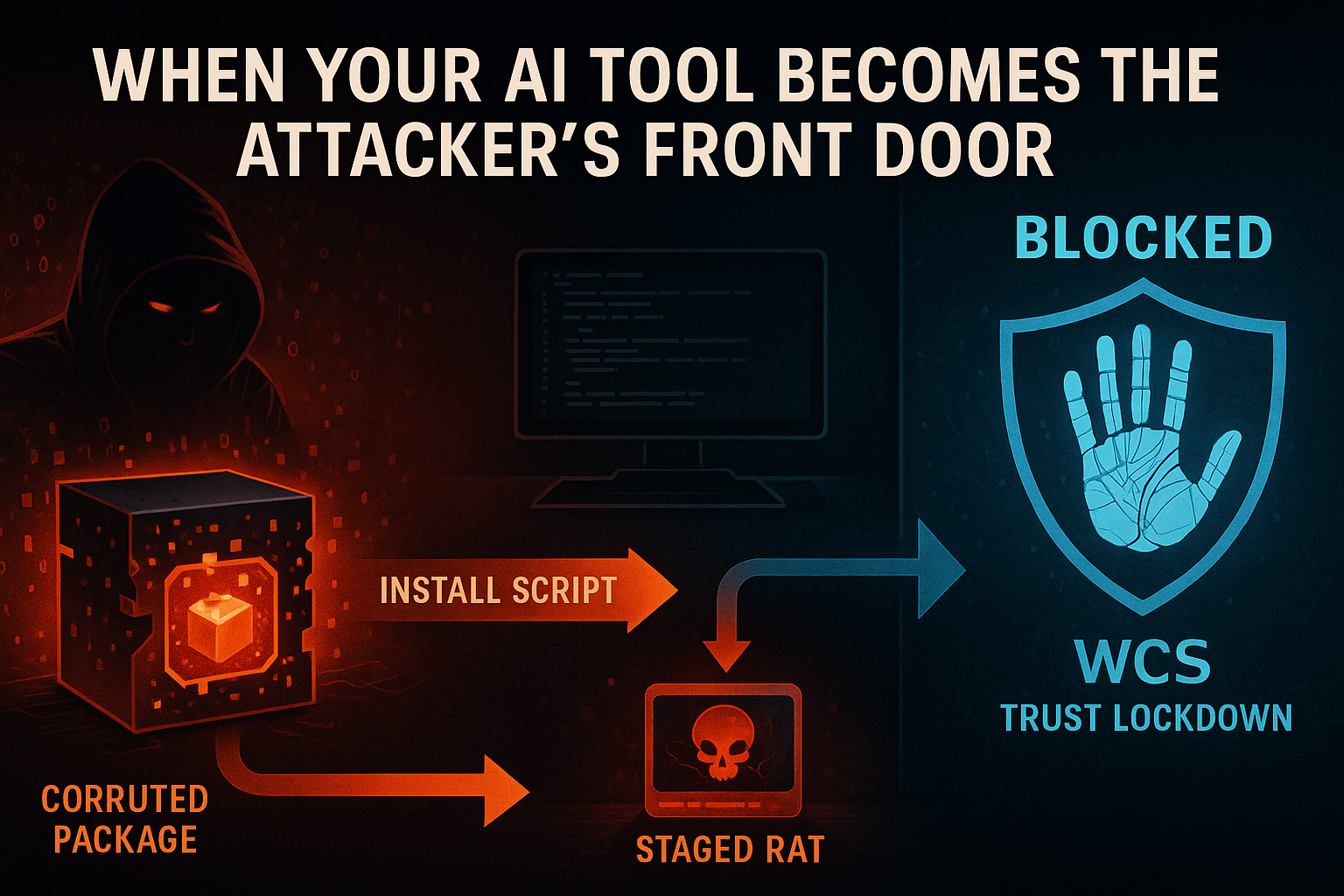

White Cloud Security (WCS) Zero-Trust Execution Control is designed to interrupt this kind of breach at the critical moment: when an attacker with a compromised identity attempts to progress from access to action. This post explains where that control fits and why approved-only execution changes the outcome.

The central thesis: Awareness training is a support control, not a security boundary. At some point, a user will approve the wrong OAuth consent, authenticate the wrong app, or interact with a convincing AI-assisted lure. The right answer is not more hope. The right answer is Zero-Trust prevention controls that hold even when a human makes the wrong trust decision.

What Happened in the Vercel Incident

The full breach chain spans three organizations and two months:

February 2026: A Context.ai employee was infected with Lumma Stealer — a commodity credential-harvesting malware delivered via game exploit downloads (specifically, Roblox auto-farm scripts). The malware harvested the employee's corporate credentials, including Google Workspace access tokens and service keys for Supabase, Datadog, and Authkit. (THN)

March 2026: The attacker used the stolen credentials to access a Context.ai email account, which enabled privilege escalation within Context.ai's infrastructure. Context.ai identified unauthorized AWS access and moved to block it. On March 27, 2026, the Context.ai Chrome extension was removed from the Chrome Web Store. (THN)

April 2026: Vercel disclosed the full incident. The attacker had used a compromised OAuth token — tied to Context.ai's Google Workspace OAuth application — to take over a Vercel employee's Google Workspace account. That employee had previously signed into Context.ai's AI Office Suite with broad "Allow All" permissions. (Vercel) (THN)

Using that Google Workspace access, the attacker gained entry to some Vercel internal environments and accessed environment variables that were not marked as sensitive. (Vercel)

What Was — and Was Not — Compromised

| Category | Status |
|---|---|
| Non-sensitive environment variables | Accessed; limited subset of customers affected |
| Sensitive environment variables | Not accessed; stored encrypted, and Vercel found no evidence of access |
| npm packages published by Vercel | Not compromised; verified with Microsoft, GitHub, npm, and Socket |
| Vercel internal systems | Partially accessed; attacker demonstrated knowledge of Vercel systems |

Vercel engaged Mandiant and notified law enforcement. The company has since shipped product enhancements including updated sensitive environment variable defaults and recommends that affected customers rotate credentials. (Vercel)
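Vercel's advice to rotate credentials, together with its change to sensitive-variable defaults, suggests a simple self-audit: find variables that look like secrets but were stored in a readable, non-sensitive form. The sketch below assumes a hypothetical list of `EnvVar` records with a `kind` of `"plain"` or `"sensitive"`; that record shape and the name patterns are illustrative assumptions, not Vercel's documented schema or API.

```python
import re
from dataclasses import dataclass

# Hypothetical record shape for this sketch, not a documented Vercel schema.
@dataclass(frozen=True)
class EnvVar:
    key: str
    kind: str  # in this model: "plain" (value readable later) or "sensitive" (not readable back)

# Illustrative name patterns that usually indicate credential material.
SECRET_NAME = re.compile(r"SECRET|TOKEN|PASSWORD|PRIVATE_KEY|API_KEY|DSN", re.IGNORECASE)

def needs_rotation(env_vars: list[EnvVar]) -> list[str]:
    """Return keys that look like secrets but were not stored as sensitive.

    Anything an attacker with a valid session could read should be treated as
    exposed: rotate the value, then re-save it as sensitive.
    """
    return sorted(
        v.key for v in env_vars
        if SECRET_NAME.search(v.key) and v.kind != "sensitive"
    )

if __name__ == "__main__":
    project = [
        EnvVar("NEXT_PUBLIC_SITE_URL", "plain"),
        EnvVar("STRIPE_API_KEY", "plain"),
        EnvVar("DATABASE_PASSWORD", "sensitive"),
        EnvVar("GITHUB_TOKEN", "plain"),
    ]
    print(needs_rotation(project))  # ['GITHUB_TOKEN', 'STRIPE_API_KEY']
```

Name matching is a coarse heuristic; it is a starting list for rotation, not proof that other variables are safe.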

A ShinyHunters persona publicly claimed responsibility and demanded $2 million for stolen data. Google Threat Intelligence Group assessed this claim as likely an imposter attempting to leverage an established criminal brand. (THN)

Why This Was More Than a Simple Account Compromise

At first glance, this looks like a standard account takeover. But the architecture of this breach reflects a much broader structural problem in how organizations have adopted AI tools.

The attacker did not need to:

- Phish the Vercel employee directly

- Exploit a Vercel vulnerability

- Compromise Vercel's network perimeter

- Know anything about Vercel's internal security posture ahead of time

They compromised a vendor, used that vendor's OAuth relationship, and walked laterally into a customer environment through a trusted authentication path.

This is OAuth as lateral movement — a phrase coined after this incident by Jaime Blasco, CTO of Nudge Security. (THN) The attacker did not need credentials to Vercel. They needed credentials to something Vercel trusted.
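One way to make "credentials to something Vercel trusted" concrete is to treat trust relationships as a directed graph and ask what is reachable from a single compromised node. The node names below are a simplified, hypothetical model of the chain described above, not an inventory of either company's systems.

```python
from collections import deque

# Hypothetical trust graph: an edge A -> B means "whoever controls A can reach or act as B".
# Simplified from the publicly described chain; node names are illustrative only.
TRUST = {
    "context_ai_employee_laptop": ["context_ai_workspace_creds"],
    "context_ai_workspace_creds": ["context_ai_oauth_app"],
    "context_ai_oauth_app": ["vercel_employee_workspace"],  # broad "Allow All" grant
    "vercel_employee_workspace": ["vercel_internal_envs"],
    "vercel_internal_envs": ["non_sensitive_env_vars"],
}

def reachable(graph: dict[str, list[str]], start: str) -> list[str]:
    """Breadth-first search: every node an attacker holding `start` can pivot to."""
    seen = {start}
    order: list[str] = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                order.append(nxt)
                queue.append(nxt)
    return order

if __name__ == "__main__":
    # One infected vendor laptop reaches a customer's environment variables
    # without any direct attack on the customer.
    print(reachable(TRUST, "context_ai_employee_laptop")[-1])  # non_sensitive_env_vars
```

In this model, deleting the `context_ai_oauth_app -> vercel_employee_workspace` edge (the equivalent of revoking the OAuth grant) leaves only the two vendor-side nodes reachable.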

As SaaS ecosystems expand, every third-party tool an employee authenticates with — every AI assistant, every productivity plugin, every browser extension granted broad Google Workspace permissions — becomes a potential pivot point. The attack surface is no longer just your systems. It includes the entire ecosystem of tools your employees trust.

Why Human Training Alone Is Not Enough

The Vercel employee who granted broad permissions to Context.ai's AI Office Suite was not negligent. They used a tool their organization approved. The OAuth consent screen did not announce "this will eventually give an attacker access to your Vercel environments." It asked for permissions that seemed reasonable for the tool's stated purpose.

Security awareness training addresses human decision-making under normal conditions. But:

- AI-generated phishing and impersonation are increasingly indistinguishable from legitimate communications

- OAuth consent flows are designed to look trustworthy — and the consent screen for a compromised app looks identical to the consent screen for a clean one

- Lateral movement through vendor relationships bypasses the employee's decision entirely after initial consent is granted

- Lumma Stealer harvested credentials silently in the background; once the infected download ran, the Context.ai employee had no visible signal that session tokens and service keys were being stolen

The question is not whether users can be trained to make better decisions. They can — and they should be. The question is whether better decisions are a sufficient security boundary in an environment where:

- Attackers use AI to personalize lures at scale

- Legitimate-looking OAuth consent flows hide downstream access implications

- Compromised vendors carry trusted access into downstream customers

They are not.

| Approach | What It Does | What It Cannot Do |
|---|---|---|
| Security awareness training | Improves human judgment under normal conditions | Cannot reliably protect against AI-crafted personalized attacks; cannot undo OAuth grants already made; cannot stop post-compromise attacker movement |
| Zero-Trust Execution Control | Enforces an execution boundary that holds regardless of human decisions or identity compromise | Requires policy definition and ongoing management |

Training tries to improve human choices. Zero-Trust Execution Control reduces the damage humans can cause when they make the wrong choice. In the AI era, organizations need both — but prevention must carry the primary burden.

The AI-Era Phishing and OAuth Problem

The Vercel breach illustrates the convergence of two trends that might each be manageable on their own but together create a structural gap in most organizations' defenses.

Trend 1 — AI-enhanced social engineering: Attackers now use AI to generate highly personalized phishing messages, impersonation content, and context-aware lures that match the target's role, language, and current projects. The era of obvious misspellings and awkward phrasing is over. An AI-generated credential phish targeting a developer looks like a legitimate GitHub notification, CI/CD alert, or teammate message.

Trend 2 — OAuth as the new attack surface: Modern SaaS ecosystems are held together by OAuth trust relationships. Every app a user authenticates with — every AI assistant, every browser plugin, every integrated tool — holds a token that may carry significant access. When any link in that chain is compromised, the attacker inherits the permissions that link was granted.
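A practical first step for the OAuth audit recommended in the summary is to list which third-party apps hold broad scopes. The sketch assumes per-user token grants have already been exported (for example from the Google Workspace Admin console or the Admin SDK Directory API's token listing) into dictionaries with `clientId`, `displayText`, and `scopes` fields. Which scopes count as "broad" is an illustrative choice for this sketch, not an official Google classification.

```python
# Scopes that grant wide read/write reach into a user's Workspace data.
# Illustrative selection for this sketch, not an official Google classification.
BROAD_SCOPES = {
    "https://mail.google.com/",                       # full Gmail access
    "https://www.googleapis.com/auth/gmail.modify",
    "https://www.googleapis.com/auth/drive",          # full Drive access
    "https://www.googleapis.com/auth/calendar",
    "https://www.googleapis.com/auth/cloud-platform",
}

def flag_broad_grants(tokens: list[dict]) -> dict[str, list[str]]:
    """Map each third-party app to the broad scopes it holds.

    `tokens` is assumed to be a list of dicts with "clientId", "displayText",
    and "scopes" keys, one per user-app grant. Apps with no broad scope are omitted.
    """
    flagged: dict[str, set[str]] = {}
    for tok in tokens:
        broad = BROAD_SCOPES.intersection(tok.get("scopes", []))
        if broad:
            name = tok.get("displayText") or tok["clientId"]
            flagged.setdefault(name, set()).update(broad)
    return {app: sorted(scopes) for app, scopes in sorted(flagged.items())}

if __name__ == "__main__":
    grants = [
        {"clientId": "111.apps.googleusercontent.com", "displayText": "Example AI Assistant",
         "scopes": ["https://www.googleapis.com/auth/drive", "openid"]},
        {"clientId": "222.apps.googleusercontent.com", "displayText": "Calendar Widget",
         "scopes": ["https://www.googleapis.com/auth/calendar.readonly"]},
    ]
    print(flag_broad_grants(grants))
    # {'Example AI Assistant': ['https://www.googleapis.com/auth/drive']}
```

Grouping by app rather than by user shows which single vendor compromise would expose the most accounts.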

The combination means:

- The attack surface is no longer just your network or endpoints — it is every SaaS relationship your employees have created

- Attackers can pivot from a compromised vendor into a customer environment without ever touching the customer's perimeter

- The initial access vector may be invisible to the target organization until after the damage is done

"Once an attacker compromises a trusted identity, the question is no longer whether the user was trained well enough. The question is whether your environment still enforces Zero-Trust execution boundaries that prevent unapproved software from running — regardless of who launched it."

Where White Cloud Security Zero-Trust Execution Control Fits

This is the most consequential enforcement layer for the post-compromise phase, and it is where the outcome of a breach like Vercel's can change.

After a privileged account or identity is compromised, attackers typically attempt to progress from access to action — and that progression almost always involves running something:

- Remote administration tools

- Credential dumping utilities (e.g., Mimikatz variants)

- Script engines invoked with attacker-supplied payloads

- LOLBins (living-off-the-land binaries: legitimate OS tools abused for attacker purposes)

- Droppers and stagers for follow-on malware

- Persistence mechanisms and scheduled task scripts

- Reconnaissance utilities

- Binaries or scripting engines that were never approved by the organization

White Cloud Security Zero-Trust Execution Control enforces a default-deny, approved-only execution policy at the endpoint level. No software executes unless it has been explicitly approved — regardless of:

- Whether the initiating account is an administrator

- Whether the process was launched by a privileged or compromised identity

- Whether the attacker has stolen valid credentials

- Whether the binary appears legitimate or is newly introduced
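The default-deny rule described above can be sketched in a few lines. This is a toy model under stated assumptions, not White Cloud Security's implementation: approval is keyed on a file's SHA-256 hash, and the launching account and its privilege level are passed in only to show that they never influence the decision.

```python
import hashlib
from dataclasses import dataclass

@dataclass(frozen=True)
class LaunchRequest:
    binary: bytes   # contents of the file about to execute
    user: str       # launching account
    is_admin: bool  # privilege level of that account

def sha256(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()

class ApprovedOnlyPolicy:
    """Toy default-deny execution policy keyed on file hash (hypothetical model)."""

    def __init__(self, approved_hashes: set[str]):
        self.approved = set(approved_hashes)

    def allow(self, req: LaunchRequest) -> bool:
        # Deliberately ignores req.user and req.is_admin: a stolen admin or SYSTEM
        # identity grants no extra permission to run unapproved code.
        return sha256(req.binary) in self.approved

if __name__ == "__main__":
    editor = b"approved text editor build 1.2"
    dumper = b"unknown credential dumping tool"
    policy = ApprovedOnlyPolicy({sha256(editor)})

    print(policy.allow(LaunchRequest(editor, "alice", is_admin=False)))             # True
    print(policy.allow(LaunchRequest(dumper, "compromised-admin", is_admin=True)))  # False
```

Keying on content rather than file path means a renamed or swapped binary is treated as new. A hash-only check does not by itself cover approved interpreters run with attacker-supplied arguments; LOLBins and script engines need additional policy rules.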

This is the distinction that makes execution control qualitatively different from identity protection:

| Layer | What It Protects | What Happens Under a Compromised Identity |
|---|---|---|
| Identity protection (MFA, SSO) | Controls who can authenticate | When credentials are stolen, attacker impersonates the user |
| Infrastructure protection (perimeter, firewalls) | Controls network access | When bypassed via trusted OAuth, attacker accesses internal systems |
| White Cloud Security Execution Control | Controls what software can run | Attacker cannot execute unapproved tools regardless of identity or privilege level |

Even where identity is lost and infrastructure is reached, execution control can still stop the next stage.

Identity compromise must not automatically become code execution. Privilege must not automatically become permission to run whatever the attacker introduces. The least-privilege execution layer breaks the kill-chain at the point where access would otherwise become damage.

If an attacker operating under a compromised Vercel Google Workspace account attempted to introduce or execute tools that were not already approved in the White Cloud Security execution policy — scripts, remote admin utilities, credential dumpers, or any unauthorized binary — White Cloud Security blocks that execution. The breach changes from "attacker can do anything the compromised admin can do" to "attacker is confined by execution policy."

At White Cloud Security, we continue to track and report new hacking methods and tools, not just because of their immediate threat, but because patterns of reuse often expose the playbooks of these cybercriminal groups.

White Cloud Security blocks unauthorized software even when launched by a SYSTEM-level or administrator-level account.

You do not have to remediate what never ran.

How the Breach Kill-Chain Changes Under a White Cloud Security Prevention Model

The table below separates confirmed facts, common attacker follow-on behaviors, and White Cloud Security counterfactual prevention points.

Where White Cloud Security Execution Control Interrupts the Chain

| Attack Stage | Confirmed? | Effect of Execution Control |
|---|---|---|
| Lumma Stealer delivery at Context.ai | Confirmed | If deployed on Context.ai endpoints, would have blocked the Lumma Stealer binary from executing |
| OAuth token abuse / Workspace takeover | Confirmed | Does not directly prevent OAuth abuse; this is an identity-layer event |
| Vercel environment access | Confirmed | Does not prevent access; identity protection is responsible for this layer |
| Execution of unauthorized tools / scripts | Likely post-compromise risk | Blocks unapproved software regardless of attacker's privilege level |
| Credential dumping / lateral movement | Likely post-compromise risk | Blocks unapproved tools used to escalate and move laterally |
| Persistence and C2 tooling | Likely post-compromise risk | Blocks execution of persistence mechanisms, RATs, and C2 agents |
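The table's split between stages that execution control can and cannot affect can be expressed as a small model. The stage list mirrors the table rows; the two flags on each stage (whether it needs new code to run on an endpoint, and whether it happens on the vendor's machines) are simplifying assumptions made for this sketch.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Stage:
    name: str
    confirmed: bool
    requires_new_execution: bool  # must unapproved code run on an endpoint for this step?
    at_vendor: bool               # does the step happen on the vendor's (Context.ai's) machines?

# Mirrors the table rows; the two boolean flags per stage are simplifying assumptions.
CHAIN = [
    Stage("Lumma Stealer delivery at Context.ai", True, True, True),
    Stage("OAuth token abuse / Workspace takeover", True, False, False),
    Stage("Vercel environment access", True, False, False),
    Stage("Execution of unauthorized tools / scripts", False, True, False),
    Stage("Credential dumping / lateral movement", False, True, False),
    Stage("Persistence and C2 tooling", False, True, False),
]

def blocked_by_execution_control(chain: list[Stage], enforced_at_vendor: bool) -> list[str]:
    """Names of stages an approved-only execution policy would stop in this model.

    Identity- and API-layer steps need no new code, so they are never counted.
    Vendor-side steps count only if the policy is also deployed at the vendor.
    """
    return [
        s.name for s in chain
        if s.requires_new_execution and (enforced_at_vendor or not s.at_vendor)
    ]

if __name__ == "__main__":
    stopped = set(blocked_by_execution_control(CHAIN, enforced_at_vendor=False))
    for s in CHAIN:
        status = "confirmed" if s.confirmed else "likely follow-on"
        result = "blocked" if s.name in stopped else "not addressed"
        print(f"{s.name:45} {status:17} {result}")
```

With `enforced_at_vendor=False`, none of the confirmed stages is blocked in this model, which matches the table's caveats: the control's contribution is confined to the follow-on stages unless it is also deployed where the initial infection occurred.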

What execution control addresses in this breach: The critical transition from access to action. An attacker with a compromised identity can read files and access environments. But the moment they try to run something (a credential dumper, a remote admin tool, a dropper, a script), execution policy decides whether that attempt succeeds or fails. Under White Cloud Security, any attempt to run software that has not been approved fails.

What execution control does not address: Execution control does not prevent the OAuth compromise itself, the credential theft at Context.ai, or the reading of environment variables via legitimate API access. Those are identity-layer and infrastructure-layer concerns. Execution control is the enforcement layer that prevents a compromised identity from becoming unrestricted code execution.

Why Prevention Beats Remediation

Vercel's response was exemplary by industry standards: Mandiant was engaged, law enforcement was notified, credential rotation guidance was issued, and product improvements were shipped within days. (Vercel) This is what good detection and response looks like.

But consider what detection and response could not undo:

- Non-sensitive environment variables for a limited subset of customers were already accessed

- The attacker had already demonstrated detailed operational knowledge of Vercel's systems

- The time between the initial compromise at Context.ai (February 2026) and Vercel's disclosure (April 2026) was approximately two months

Detection responds to damage. Prevention stops damage from occurring.

White Cloud Security's enforcement model does not depend on bringing in an incident response firm such as Mandiant after the fact. It requires policy definition up front, and then it enforces those policies automatically, at execution time, without waiting for a signature update, a behavioral pattern match, or a threat intelligence feed to catch up.

| Dimension | Detection & Response | White Cloud Security Prevention Model |
|---|---|---|
| When protection activates | After activity is observed | Before execution is permitted |
| Dependency on threat intelligence | Critical | Not required |
| Dwell time of attacker tooling | Measured in days to months | Zero; unapproved software never runs |
| Remediation required | Yes | Not required for blocked activity |
| Attacker tool execution | Possible before detection | Blocked at policy enforcement layer |
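The "Dependency on threat intelligence" row comes down to a difference in default. A detection model that blocks known-bad hashes lets an unseen sample run until a feed catches up; an approved-only model blocks the same sample because it was never approved. Both lists in this sketch are hypothetical.

```python
import hashlib

def h(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()

def denylist_allows(sample: bytes, known_bad: set[str]) -> bool:
    """Default-allow: anything not already flagged by threat intelligence runs."""
    return h(sample) not in known_bad

def allowlist_allows(sample: bytes, approved: set[str]) -> bool:
    """Default-deny: only explicitly approved content runs."""
    return h(sample) in approved

if __name__ == "__main__":
    approved = {h(b"company build tool v3")}
    known_bad = {h(b"stealer variant seen last month")}
    fresh_sample = b"stealer variant recompiled today"  # new hash, not in any feed yet

    print(denylist_allows(fresh_sample, known_bad))  # True: it runs
    print(allowlist_allows(fresh_sample, approved))  # False: it is blocked
```

The trade-off is the one named earlier: the allowlist model moves the work from tracking every new threat to maintaining the `approved` set.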

Key Takeaways

- The Vercel breach did not require a single line of malware on Vercel's systems. It moved through OAuth trust relationships — a pattern that is becoming standard attacker tradecraft.

- Awareness training is a support control, not a security boundary. A user who grants broad permissions to an AI tool is often not making an obviously wrong decision — they are making the decision the consent screen was designed to elicit.

- White Cloud Security Zero-Trust Execution Control is the enforcement layer that matters when identity is lost. Even if an attacker compromises a privileged account and reaches internal systems, they cannot execute unapproved software. Identity compromise does not become unrestricted execution.

- White Cloud Security's prevention model eliminates the need to remediate what never ran. The question is not whether users can be trained better. The question is whether your environment enforces Zero-Trust execution boundaries that hold even when a user makes the wrong trust decision.

References

- Vercel: April 2026 Security Incident Bulletin (Vercel)

- The Hacker News: Vercel Breach Tied to Context AI Hack (THN)

Further Reading

- ClickFix: The Social Engineering Attack That Turns Users Into Their Own Attackers

- Axios npm Supply Chain Attack: Sapphire Sleet and Zero-Trust Defense

- Why Informed CTOs and CFOs Use Trust Lockdown Instead of Detection-Based Security

- The Mincemeat Attack: Hidden Instructions, Trusted Content, and AI Deception